Centro de Neurociencia Social y Cognitiva - UAI

Latest Research

When alertness fades: Drowsiness-induced visual dominance and oscillatory recalibration in audiovisual integration

Felipe Rojas

Social Neuroscience

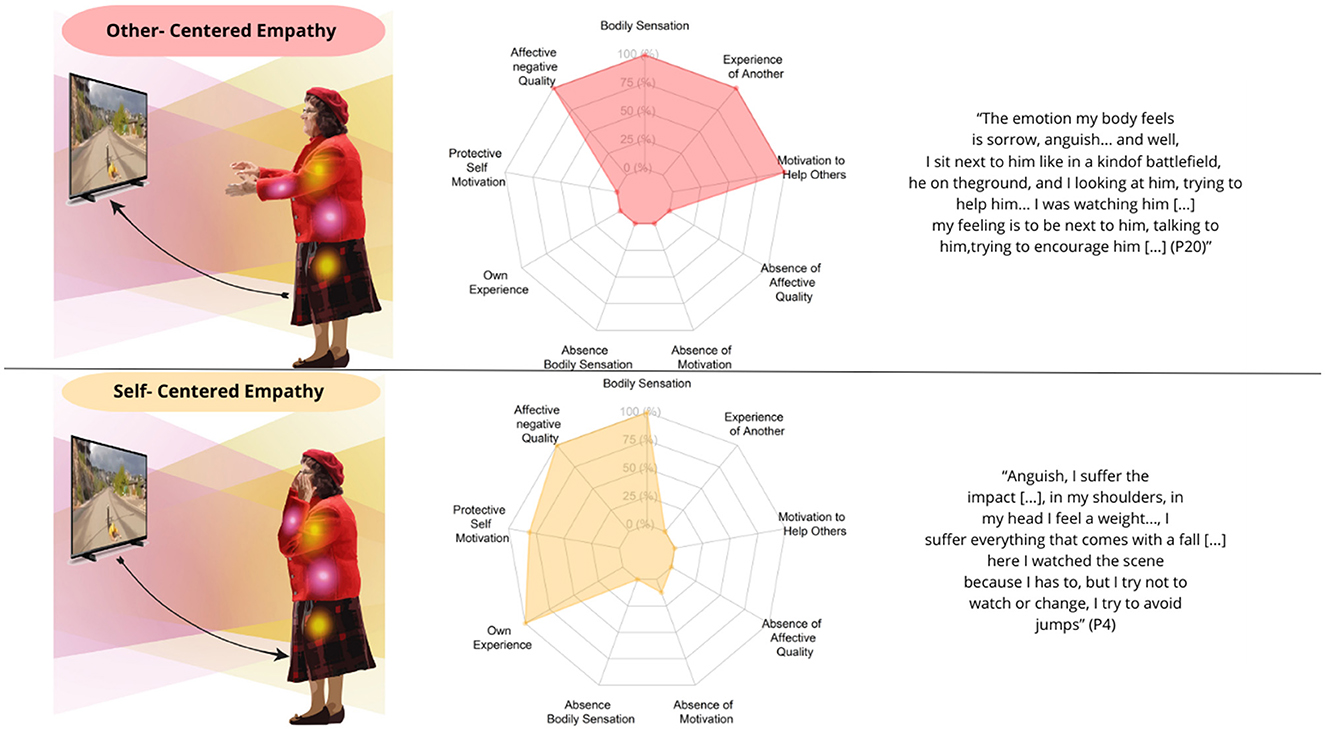

From disconnection to compassion: a phenomenological exploration of embodied empathy in a face-to-face interaction

Alejandro Troncoso, Antonia Zepeda, Vicente Soto, Ellen Riquelme, David Martínez-Pernía

Social Neuroscience

The spectrum of embodied intersubjective synchrony in empathy: from fully embodied to externally oriented engagement in Parkinson's disease

Antonia Zepeda, Alejandro Troncoso, Daniela Pizarro, Constanza Baquedano, Kevin Blanco, David Martínez-Pernía

Social Neuroscience

Breaking the chains of independence: A Bayesian uncertainty model of normative violations in human causal probabilistic reasoning

Sergio Chaigneau

Social Neuroscience

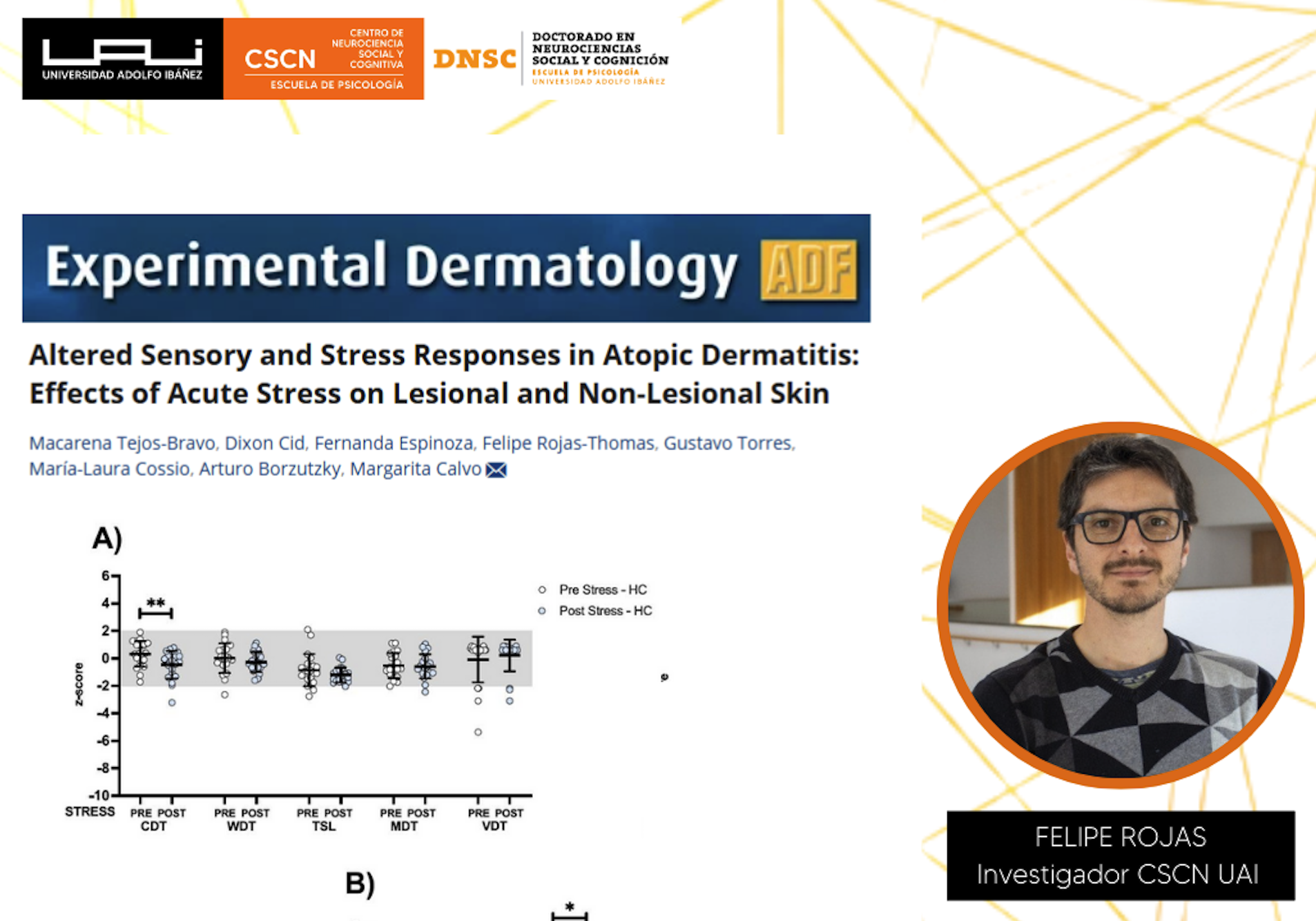

Altered Sensory and Stress Responses in Atopic Dermatitis: Effects of Acute Stress on Lesional and Non-Lesional Skin

Felipe Rojas

Social Neuroscience
